One of the most deflating findings within social science over the past couple years is that social science itself is embroiled in a replication crisis. Lots of the all-star, big-time, TED talk–y findings are having trouble being re-found by other researchers, from the machinations of self-control to the success-bestowing capacity of “grit” to the power of posing.

The crisis is a reminder that Science isn’t some monolithic, unitary entity etching Truth into any number of fancy academic journals, but rather a professional field that actual humans go into and attempt to make a living in. In a new paper for Royal Society Open Science, University of California, Merced, cognitive scientist Paul Smaldino and Max Planck evolutionary ecologist Richard McElreath contend that it is academia’s cultural and economic structures that create the incentives whereby well-meaning researchers produce work with shoddy research methods and statistical procedures.

“My impression is that, to some extent, the combination of studying very complex systems with a dearth of formal mathematical theory creates good conditions for low reproducibility,” Smaldino told the Guardian. “This doesn’t require anyone to actively game the system or violate any ethical standards. Competition for limited resources — in this case jobs and funding — will do all the work.”

It’s a form of natural selection, they say: When professional fitness is defined by the number of publications you rack up, researchers and their labs will be given to optimizing for publishing broadly rather than researching deeply. The methods that lead researchers to career success then spread through “progeny” in the form of graduate students in their labs, or through other labs copycatting their successes, not unlike America’s college football coaches collectively falling in love with the spread offense.

“We term this process the natural selection of bad science to indicate that it requires no conscious strategizing nor cheating on the part of researchers,” Smaldino and McElreath write. “An incentive structure that rewards publication quantity will, in the absence of countervailing forces, select for methods that produce the greatest number of publishable results. This, in turn, will lead to the natural selection of poor methods and increasingly high false discovery rates.”

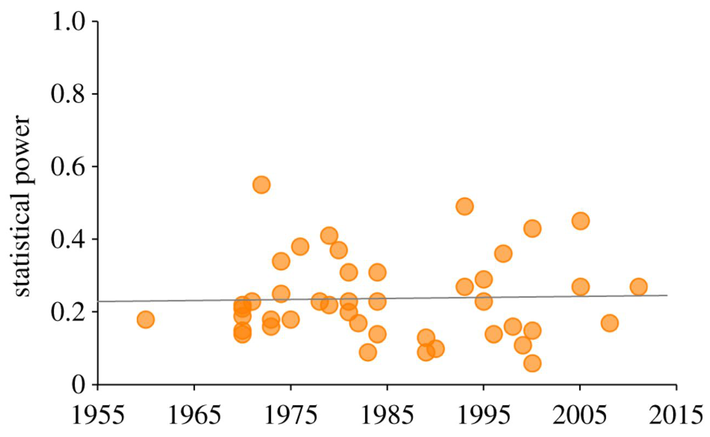

The authors do some fancy statistical work themselves, the most damning of which regards statistical power, or whether a given experiment has enough participants to reliably detect an effect that really exists. (Underpowered studies miss real effects, and the positive results they do turn up are more likely to be false.) Though the low levels of statistical power in social science have been called out since 1962, they haven’t much improved. Putting together a 60-year meta-analysis, Smaldino and McElreath find that “statistical power shows no sign of increase over six decades,” as the accompanying chart illustrates.
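To see why low power matters so much, it helps to run the arithmetic. The sketch below is a back-of-the-envelope calculation, not the model from the paper: it uses the standard formula for the share of "significant" results that are false positives. The function name and the numbers fed into it are illustrative assumptions, in particular the guess that only 10 percent of tested hypotheses are true and the conventional 0.05 significance threshold.

```python
def false_discovery_rate(power, alpha=0.05, prior_true=0.10):
    """Share of statistically significant results that are false positives.

    power      -- chance a study detects an effect that really exists
    alpha      -- chance a study "finds" an effect that does not exist
    prior_true -- assumed share of tested hypotheses that are actually true
                  (an illustrative guess, not a figure from the paper)
    """
    true_positives = power * prior_true
    false_positives = alpha * (1 - prior_true)
    return false_positives / (true_positives + false_positives)


# Compare a well-powered study with increasingly underpowered ones.
for power in (0.8, 0.5, 0.2):
    fdr = false_discovery_rate(power)
    print(f"power={power:.1f}  false discovery rate={fdr:.2f}")
```

Under these assumed inputs, the share of false positives among significant findings rises from about a third at 80 percent power to more than two-thirds at 20 percent power. Different guesses about how many hypotheses are true would shift the exact figures, but not the direction.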

The principle at work here may be Campbell’s Law, named for Donald Campbell, one of the 20th century’s most influential psychologists. In a 1976 paper, he observed that:

“The more any quantitative social indicator is used for social decision-making, the more subject it will be to corruption pressures and the more apt it will be to distort and corrupt the social processes it is intended to monitor.”

It’s important to note that “corrupt” here doesn’t mean politicians taking bribes; it means being altered from an original intent or purpose, like a file on your computer getting corrupted. Campbell’s Law is a super-helpful tool for understanding why the world’s gone nuts, from the entrenchment of high-stakes standardized testing in education to clickbait journalism to statistic-padding police work. Scientists, teachers, reporters, and cops are all humans, and they all respond to the structures of their environments, for better or worse, and an extreme emphasis on the quantitative can come at the cost of the qualitative. When the tail of the metric wags the dog of practice, bad things ensue.