Two weeks ago, Science of Us published my critique of the implicit association test, a Harvard-backed reaction-time-based task that supposedly measures your level of implicit bias against certain groups, but which is riddled with methodological and statistical problems. While the article mentioned glowing media coverage mostly in passing, focusing instead on the academic literature, overly credulous media coverage of the IAT has greatly helped to increase the popularity of the test, as well as to spread misunderstanding about it.

A good example of this came over the weekend, in an article entitled “How Unconscious Sexism Could Help Explain Trump’s Win.” In the article, Carl Bialik lays out the case for how IAT data about implicit sexism might offer some hints about how Donald Trump prevailed over Hillary Clinton. But if you look closely at the data and claims he evaluates, things get pretty muddled. This doesn’t mean implicit sexism didn’t affect the campaign, of course — it just means we should think twice before accepting that the IAT accurately measures implicit sexism and is a useful tool for explaining what happened.

The first thing to know about implicit-sexism IATs is that they follow a pattern not really seen in other areas of IAT research. Generally speaking, for IATs dealing with some oppressed class of people, nonmembers of that group score higher, and are therefore seen as more implicitly biased against the group. White people generally score higher on a so-called black-white IAT than black people, for example, while ethnic Germans generally score higher than ethnic Turks on IATs involving traditionally German and traditionally Turkish names (Turks are a marginalized minority group in Germany).

Sexism IATs are different. As Greg Mitchell and Phil Tetlock put it in a book chapter that is very critical of the IAT, “One particularly puzzling aspect of academic and public dialogue about implicit prejudice research has been the dearth of attention paid to the finding that men usually do not exhibit implicit sexism while women do show pro-female implicit attitudes.” This appears to be a pretty robust finding, and if you translate it into the same language IAT proponents speak elsewhere, it means men don’t have implicit sexism and are therefore unlikely to make decisions in an implicitly sexist manner (women, meanwhile, will likely favor women over men in implicitly-driven decision-making). Even weirder, when you switch to IATs geared at evaluating not whether the test-taker implicitly favors men over women (or vice versa), but whether they are quicker to associate men versus women more with career, family, and similarly gendered concepts, the IAT somewhat reliably evaluates women as having higher rates of implicit bias against women than men do.

After explaining that Trump voters tend to express higher degrees of explicit sexism, Bialik writes that “the tendency to associate men with careers and women with family” is “stronger on average in women than in men, and, among women, it is particularly strong among political conservatives. And at least according to one study, this unconscious bias was especially strong among one group in 2016: women who supported Trump.”

Bialik, a good and smart reporter whom I read regularly, is cautious in his phrasing throughout the piece; at no point does he make explicitly overheated claims about the IAT. But there is some shakiness to the claim that any of the IAT data he presents makes a credible case for IAT-measured implicit bias playing a role in the election, and this is a useful case study in the broader journalistic conversation about the IAT, which tends to be a bit too solicitous toward questionable claims made by IAT proponents.

First, Bialik’s only direct support for the idea of a link between vote preference and IAT scores is a preelection study conducted by “HCD Research, a New Jersey research firm,” but the results of that study don’t really support a narrative in which IAT scores help us understand the election. Bialik relates the “striking” result that “[m]ore than 80 percent of [women who intended to vote for Trump] showed a bias toward linking men with careers more quickly than women, compared with 74 percent of women supporting Clinton and a little more than 50 percent of men supporting either candidate.” But if you follow Bialik’s own link over to the study, HCD Research notes that, “Interestingly, we found that study participants did not exhibit gender stereotype associations, regardless of who they plan to vote for (Clinton, Trump, or third party).”

Bialik explained to me that this simply had to do with how HCD Research broke down the subgroups in its publication versus the more granular way they did so for him. He pointed me to the firm’s staffer Michelle Niedziela, and she confirmed this to me in an email. “Nobody was gender biased and there were no significant differences across parties,” she wrote. “However, when we began to dig a bit deeper we found that women held a low level of gender bias while men did not. Looking at the Clinton men and women, we found they had no gender bias. Trump women, however, were the only group to show low levels of gender bias, with Trump men just missing the cutoff (.2 [on the scoring scale]).” And Bialik’s 80 percent and 74 percent figures include anyone who scored positively on the scale, even if they were below the firm’s own cutoff for a meaningful positive score.
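To see how "more than 80 percent showed a bias" and "nobody was gender biased" can both describe the same data, here is a minimal sketch. The scores are invented for illustration and are not HCD's data; the 0.2 cutoff comes from Niedziela's email, but applying it to a subgroup's mean score is an assumption about how the firm works, and the function names are hypothetical.

```python
from statistics import mean

# Hypothetical IAT-style scores for one subgroup of ten respondents.
# These numbers are made up for illustration; they are NOT HCD's data.
scores = [0.05, 0.10, -0.20, 0.15, 0.08, 0.12, -0.30, 0.18, 0.02, 0.10]

# The ".2" threshold from the email. Comparing it against the subgroup
# mean is an assumption about the firm's scoring convention.
CUTOFF = 0.2


def share_positive(values):
    """Fraction of respondents whose score is above zero at all."""
    return sum(v > 0 for v in values) / len(values)


def group_meets_cutoff(values, cutoff=CUTOFF):
    """True if the subgroup's mean score reaches the cutoff."""
    return mean(values) >= cutoff


print(f"share scoring above zero: {share_positive(scores):.0%}")
print(f"mean score: {mean(scores):.2f}")
print(f"meets cutoff: {group_meets_cutoff(scores)}")
```

In this made-up subgroup, 80 percent of respondents score above zero, yet the mean score is 0.03, far short of the 0.2 cutoff. Counting everyone with a positive score can produce a large-sounding percentage even when the group as a whole shows no meaningful effect.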

Long story short, one subgroup in the HCD experiment exhibited a “low” level of gender bias against women, while none of the others exhibited a meaningful amount, by the firm’s scoring convention. Is this “striking,” or worth pointing to as even a potentially interesting explanation for the cognitively and sociologically complicated act of choosing whom to vote for? Would we be talking about it at all if we weren’t in the midst of an age of rampant, frequently overheated excitement about these nifty tests?

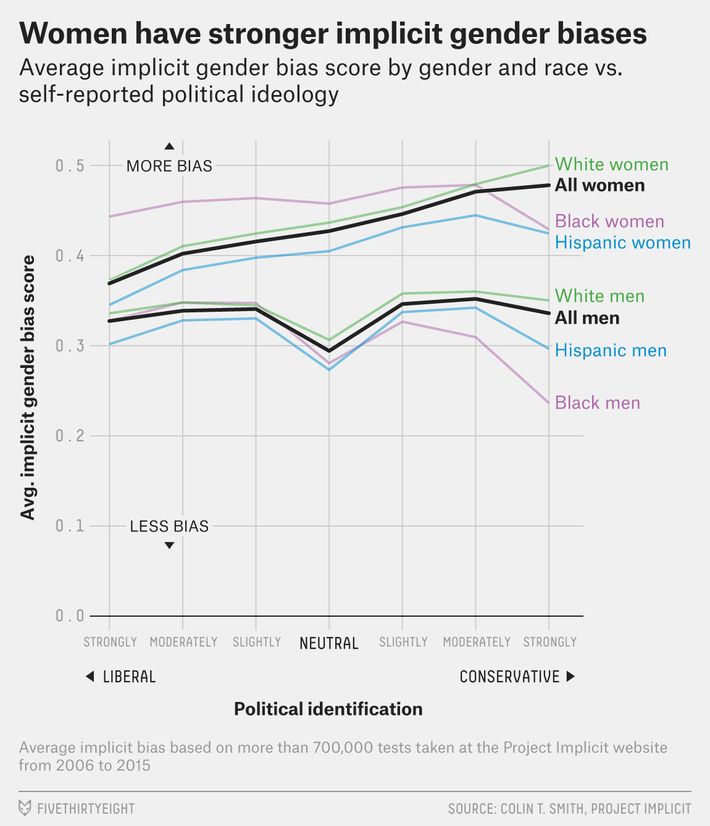

This chart from the FiveThirtyEight piece, drawn from data generated by Harvard’s Project Implicit website, also stands out:

Keep in mind that we’re evaluating a couple of claims here, not settled facts. The claims in question are (1) that sexism IATs accurately measure implicit sexism, and (2) that there’s some good reason to think that data generated from these scores might tell us something interesting about voting behavior.

We’re off to a rocky start given that, as noted above, the gender-stereotype IAT data follows, basically, the exact opposite pattern from racism IAT data, with members of the marginalized group scoring higher. And yet we’re being asked to nod along with the same story line that high IAT scores predict biased behavior against the out-group or marginalized group. That is, we’re being asked to believe that strongly and moderately liberal women are, all else being equal, more likely to hold implicitly sexist associations than far-right men, and therefore (by the logic of both IAT proponents and Bialik’s article) more likely to act in an implicitly sexist manner in the voting booth and other contexts. Again, if these aren’t the claims, then what’s the point of any of this? We wouldn’t care about these scores or this graph if they didn’t come accompanied by the claim, or at least the possibility, that all this data maps onto actual real-world bias and real-world behavior.

Is it possible that liberal women are more implicitly sexist than far-right men? I … suppose? The IAT narrative gives itself an ‘out’ here by claiming that the IAT taps into implicit beliefs that are unconscious and totally separate from our explicit beliefs. But at a certain point, common sense has to kick in: There’s a lot of research showing that strongly conservative men (and women, too) have traditional, rigid notions about where men and women “belong.” There’s no residue of this in implicitly held beliefs? Liberal women really believe, in their deep-down unconscious, that women belong in the kitchen more than conservative men and women do? In light of the numerous problems that have been uncovered with the IAT, Occam’s razor suggests that “this test is less meaningful than we thought” is a more justifiable conclusion.

Bialik, for his part, was very responsive to my queries, even after I explained I was going to be criticizing his article. And he made some fair points. “These are preliminary results, worth treating with caution and following up with further research,” he said via DM. “I don’t think we should discount them automatically because the results are counterintuitive, or seem different at first glance than results of tests for other kinds of implicit bias. This gender test is fundamentally different from the typical tests of implicit racial bias, which test associations between racial groups and positive or negative words. There isn’t anything inherently positive or negative about family vs. career, the subjects of this test; it’s about how quick people are to associate men or women with family-related words relative to career-related words.”

Sure — a result being counterintuitive definitely isn’t reason enough to discard it. But it just feels like the problem with IAT coverage goes deeper than that, that something about how sexy the IAT is, and how much promise it offers (on paper), seems to short-circuit certain forms of question-asking. There is a lot of confusion about what the IAT can and can’t do, and a lot of claims about it don’t quite seem to be falsifiable. It’s telling that if you flipped the above graph on its head — if men were more implicitly biased against independent and career-oriented women than women were at every point on the political spectrum — you could also write an article about how IAT scores might help explain voter behavior: “This test shows that, as we might expect, men are implicitly biased against the notion that women should have careers and be independent, which can help explain why they voted for Clinton in such lower numbers.”

A small but telling moment comes at the conclusion of Bialik’s piece:

> HCD would like to do follow-up studies on the topic, including tracking bias levels over time and examining whether exposure to certain segments of the media affects bias. Michelle Niedziela, scientific director of HCD Research, said she initially found the finding surprising but has since rethought her position.
>
> She recounted a conversation she had after the election with a woman she knows who had voted for Trump. “She was saying things I found to be really shocking, that I had never heard before,” Niedziela said. The woman, a professional, told Niedziela that she didn’t think men and women should get equal pay. “This study reflects these beliefs; they are out there,” Niedziela said. “Maybe we shouldn’t be shocked.”

But that’s an explicit belief! An IAT study is very much not supposed to “reflect” an explicit belief. The entire conceit of the test, in fact — and the justification for its widespread popularity and adoption — is the claim that it can capture implicit beliefs, which are separate. The fact that we’re two decades into the history of these tests, and even the people who get paid to administer and study them seem a bit shaky about exactly what they’re measuring, should tell us something about the quality of the underlying instrument.