Late in January, the researchers Jordan Anaya, Nick Brown, and Tim van der Zee identified some fairly baffling problems in the research published by Cornell University’s Food and Brand Lab, one of the more famous and prolific behavioral-science labs in the country, and published a paper revealing their findings. As I wrote last month, “the problems included 150 errors in just four of [the] lab’s papers, strong signs of major problems in the lab’s other research, and a spate of questions about the quality of the work that goes on there.”

Brian Wansink, the lab’s head and a big name in social science, was a co-author on all those papers. He refused to share the underlying data in a manner that could help resolve the situation, though he did announce certain reforms to his lab’s practices and said he would hire someone uninvolved with the original papers to reanalyze the data. Wansink, whose lab is known for producing a steady stream of catchy, media-friendly findings about how to nudge people toward healthier eating and healthier habits in general, has also openly admitted to a variety of data slicing-and-dicing methods that are very likely to produce misleading and overblown results.

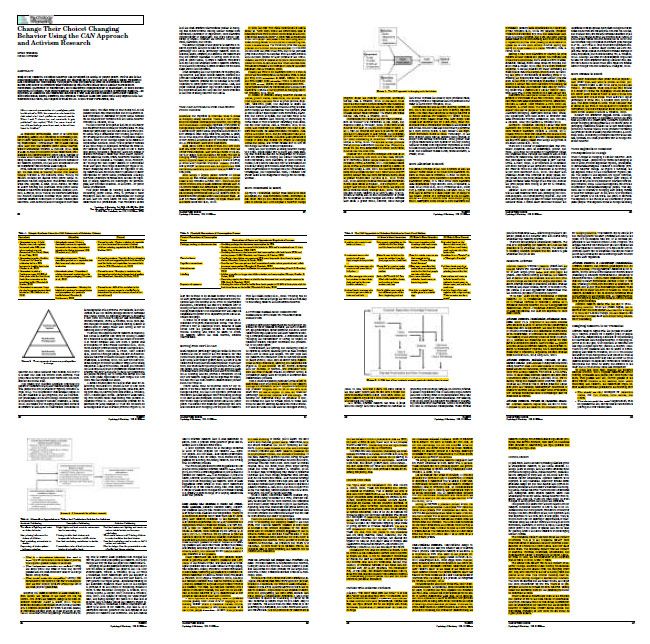

Wansink’s problems just got a lot worse. Today, Brown, a Ph.D. student at the University of Groningen, published a blog post highlighting many more problems with Wansink’s research practices. First, it appears that over the years, Wansink has made a standard practice of self-plagiarism, regularly taking snippets of his text from one publication and dropping them into another — a practice that, while not as serious as outright data fraud or plagiarizing someone else’s material, is very much frowned upon. And sometimes it was more than “snippets.” Brown includes the following image of one Wansink article in which all of the yellow material (plus three of the four figures, which Brown said he couldn’t figure out how to highlight) is lifted from Wansink’s own previously published work:

In another instance, Brown writes, Wansink appears to have published the same text as two different book chapters at around the same time. “Each chapter is around 7,000 words long,” he writes. “The paragraph structures are identical. Most of the sentences are identical, or differ only in trivial details.”

Brown offers a compelling case both that this sort of self-plagiarism was a pattern for Wansink and that he may have engaged in more serious misconduct as well. Summing up Brown’s findings in The Guardian, Chris Chambers and Pete Etchells write:

… Brown also alleges a more serious case that, at a minimum, suggests an exceptional coincidence, but may point to duplicate publication of data, or might also indicate data manipulation. Brown purports to show that two studies published by Wansink in 2001 and 2003 present uncannily similar results, with 39 out of 45 outcomes identical to the decimal point, despite being drawn from different samples. In the 2001 study, Wansink reports recruiting “153 members of the Brand Revitalisation Consumer Panel” while in the second study the reported sample consisted of 654 respondents to a nationwide survey “based on addresses obtained from census records”. How such similar results could emerge from two distinct studies, and two distinct samples, remains unexplained. At the time of publication, Wansink had not responded to requests for comment. A query to the Cornell University Office of Research Integrity and Assurance resulted in a reply from Finn Partners PR Firm, pointing to a statement from Wansink that did not address the most recent allegations.

It’s important to note that Wansink published these studies before coming to the Food and Brand Lab, but still, this is entirely bizarre. It’s a really, really hard thing to explain, and Occam’s razor points only to explanations that involve, at best, negligence that would likely derail the careers of most young researchers, and at worst outright data fraud.

It’s now even more urgent for Wansink and Cornell to offer up a meaningful response to this steady drumbeat of serious allegations. As I argued last month, 150 errors in four papers would on its own be a reason not to trust anything produced by the Food and Brand Lab — not until Wansink can explain exactly what happened. Now, though? What possible reason is there to trust this lab’s output at all, let alone for journalists to continue to publicize its findings?

Up to this point, it appears Cornell has given Wansink near-full discretion over how to handle all this. “While Cornell encourages transparent responses to scientific critique, we respect our faculty’s role as independent investigators to determine the most appropriate response to such requests, absent claims of misconduct or data sharing agreements,” John J. Carberry, the university’s head of media relations, said in a statement emailed to Science of Us last month.

That sentence has not aged well. Maybe it’s time for Cornell to seize the reins rather than act as though what’s going on here is just normal scientific back and forth that Wansink can address on his own. Until the university’s administrators do, this growing scandal will continue to inflict serious damage on Cornell’s reputation as a research university.