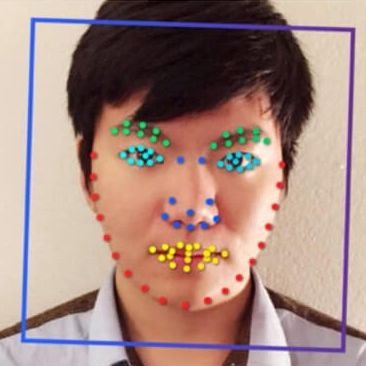

A recent Stanford University study published in the Journal of Personality and Social Psychology claimed artificial intelligence can figure out if a person is gay or straight by analyzing pictures of their faces. However, the Outline reports the study was met with “immediate backlash” from the AI community, academics, and LGBTQ advocates alike — and the paper is now under ethical review.

The study found that an algorithm could guess a person’s sexual orientation after analyzing pictures of their face, and that it was accurate 81 percent of the time for men and 74 percent of the time for women. The authors claimed that these findings provided “strong support” for the idea that sexual orientation stems from hormone exposure in the womb.

Yet the Outline reports that critics of the paper questioned the authors’ methodology and conclusions, and objected that the study included no people of color. Per the Outline:

> The paper, titled “Deep neural networks are more accurate than humans at detecting sexual orientation from facial images,” is now being re-examined, according to one of the journal’s editors, Shinobu Kitayama. “An ethical review is underway right at this moment,” Kitayama said when reached by email. He declined to answer further questions, but suggested the review’s findings would be announced in “some weeks.”

As Motherboard reports, Greggor Mattson, an associate professor of sociology at Oberlin College, claimed in a blog post that the paper was merely the “most recent example of discredited studies attempting to determine the truth of sexual orientation in the body.” Meanwhile, University of Maryland sociology professor Philip N. Cohen alleged that the paper was plagued by methodological problems and that the study authors misinterpreted the results.